Last November I attended the Formula 1 race in Las Vegas. Watching cars hit 200+ mph made me think about something unexpected: speed only works when safety is engineered into the system.

That lesson applies directly to AI.

Watching the cars fly down the Strip at more than 200 miles per hour is hard to describe if you’ve never experienced it in person.

The speed is breathtaking.

But what struck me most wasn’t just the speed.

It was how much engineering exists to protect a single human life.

Modern Formula 1 cars are engineering marvels designed to protect drivers at extreme speeds. Over the decades the sport has introduced innovations like crash structures, telemetry monitoring, and the Halo cockpit protection system. Each new safety measure sparked debate at first. Some argued it would slow the sport down.

But history has shown the opposite. The safer the sport became, the faster the cars were able to go.

And it got me thinking about something else moving incredibly fast right now: artificial intelligence.

Responsible AI Starts with People

AI adoption is accelerating across organizations.

Teams are experimenting.

Leaders are evaluating risk.

Policies are still catching up.

The real question isn’t whether AI will be used. It’s how organizations ensure AI is used responsibly while still encouraging innovation.

Responsible AI is often framed as governance or compliance, but at its core it’s something broader. Responsible AI isn’t about protecting models or algorithms – it’s about protecting people. The goal is to design AI systems that improve human outcomes, preserve trust, reduce waste, and avoid unintended harm.

That requires stewardship.

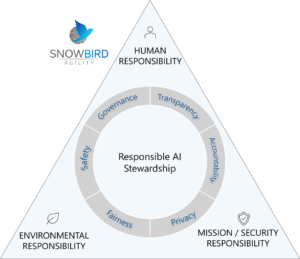

The Responsible AI Stewardship Model

Responsible AI requires balancing responsibility across three domains:

- Human Responsibility: Protect people, dignity, fairness, and human accountability
- Mission / Security Responsibility: Ensure trust, reliability, transparency, and alignment with laws and policies
- Environmental Responsibility: Use AI infrastructure responsibly and sustainably

When these responsibilities are balanced, organizations can move forward with confidence.

Operational Principles

In practice, this stewardship shows up through several key principles:

- Human-Centered: AI should augment human capability, not replace human responsibility
- Safety: AI systems should behave predictably and minimize the risk of harm
- Quality: Outputs should meet defined standards and produce reliable results
- Consistency: AI systems should behave reliably across similar situations and use cases
- Transparency: People should understand when AI is being used and how it contributes to outcomes
- Ethics: AI should reflect the values and expectations of the organizations and communities it serves
- Sustainability: AI development and usage should consider energy use and environmental impact

These principles help ensure AI improves human outcomes, preserves trust, reduces waste, and avoids unintended harm.

Speed With Confidence

The lesson from Formula 1 is simple. Speed without safety eventually stops the race. But when safety is engineered into the system, speed becomes sustainable. Responsible AI works the same way. When organizations embed responsible stewardship into how AI operates, something important happens.

Teams stop asking:

“Are we allowed to use AI?”

And start asking:

“How can we use AI better?”

And that’s when innovation really accelerates.

Dan Foster has more than 25 years of experience in information technology and services, specializing in business agility transformation, Lean-Agile frameworks, and AI-enabled operating models. As a Transformation Leader at Snowbird Agility, Inc., he partners with executives, portfolios, and delivery teams to implement SAFe®, align strategy to execution, and improve flow, predictability, and measurable outcomes.

He may be reached at [email protected]
