The following article by Snowbird Senior Agilist Jason Blake appeared as a Feature in Orange Slices AI on April 2, 2026

(as a government-focused follow-up to Part II in our series)

In the first article of this series, “Speed Requires Stewardship,” we explored a critical idea:

Speed without stewardship is a liability.

In Formula 1, the fastest teams don’t just push performance—they engineer systems that can operate safely at extreme speeds. Precision, telemetry, and governance are not constraints. They are what make speed possible.

The same principle applies to AI—especially within federal agencies, where speed must be balanced with accountability, transparency, and public trust. As agencies accelerate adoption, the question is no longer whether to use AI—but:

Are we becoming intelligent organizations—or simply using intelligent tools?

The Shift: From Tools to Mission Systems

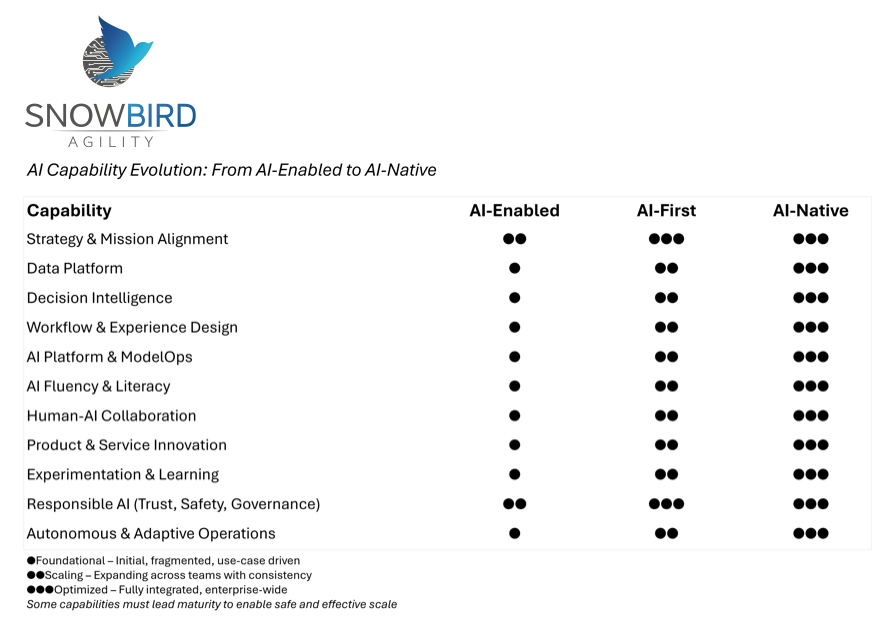

Most agencies today are AI-enabled.

They are embedding AI into existing workflows: automating tasks, summarizing information, and improving operational efficiency. For example, agencies are increasingly using AI to process large volumes of documents, such as requests under the Freedom of Information Act (FOIA), which gives the public the right to request access to government records and often creates significant document review workloads. Several federal agencies, including the U.S. Department of Justice, have piloted AI to assist with FOIA document review and redaction. While these tools can reduce backlog and processing time, they also introduce risks around accuracy and completeness, highlighting the need for strong validation and oversight.

AI-enabled agencies are asking:

“How can AI help us do what we already do—faster?”

The federal government’s growing portfolio of AI use cases highlights a clear shift—from isolated tools toward enterprise-wide intelligence—raising the stakes for responsible implementation.

— AI.gov

The next stage is AI-first. Here, AI begins to shape how decisions are made—not just how work is completed.

For federal agencies, this often includes mission-critical areas such as resource prioritization and oversight. For example, the Centers for Medicare & Medicaid Services uses AI-driven fraud detection to identify suspicious billing patterns and prioritize investigations. This enables more effective use of limited resources—but also introduces the need for careful oversight to avoid false positives and ensure fair, explainable decision-making.

At this stage, the challenge shifts from efficiency to accountability.

Without clear governance, agencies risk:

- Biased or opaque prioritization decisions

- Lack of explainability in mission-critical actions

- Misalignment with policy or regulatory expectations

AI-first agencies are asking:

“Where should AI guide how we prioritize and decide?”

The real transformation, however, is AI-native. This is where the Formula 1 analogy fully applies. An F1 team doesn’t optimize individual components—it builds an integrated system where the car, telemetry, race strategy, and driver operate as a coordinated intelligence system in real time.

Similarly, an AI-native agency doesn’t just automate steps—it redesigns mission delivery itself.

Consider end-to-end case management. The U.S. Department of Veterans Affairs has applied AI to modernize veterans’ benefits processing—from document classification to decision support. This reflects a shift toward reimagining the entire case lifecycle, where intelligence supports intake, prioritization, adjudication, and continuous learning.

But this level of transformation introduces new risks if not properly governed:

- Over-reliance on automated recommendations

- Lack of transparency in decision logic

- Challenges in accountability for outcomes

AI-native agencies are asking:

“If intelligence were embedded across the entire mission lifecycle, how would we design this system responsibly?”

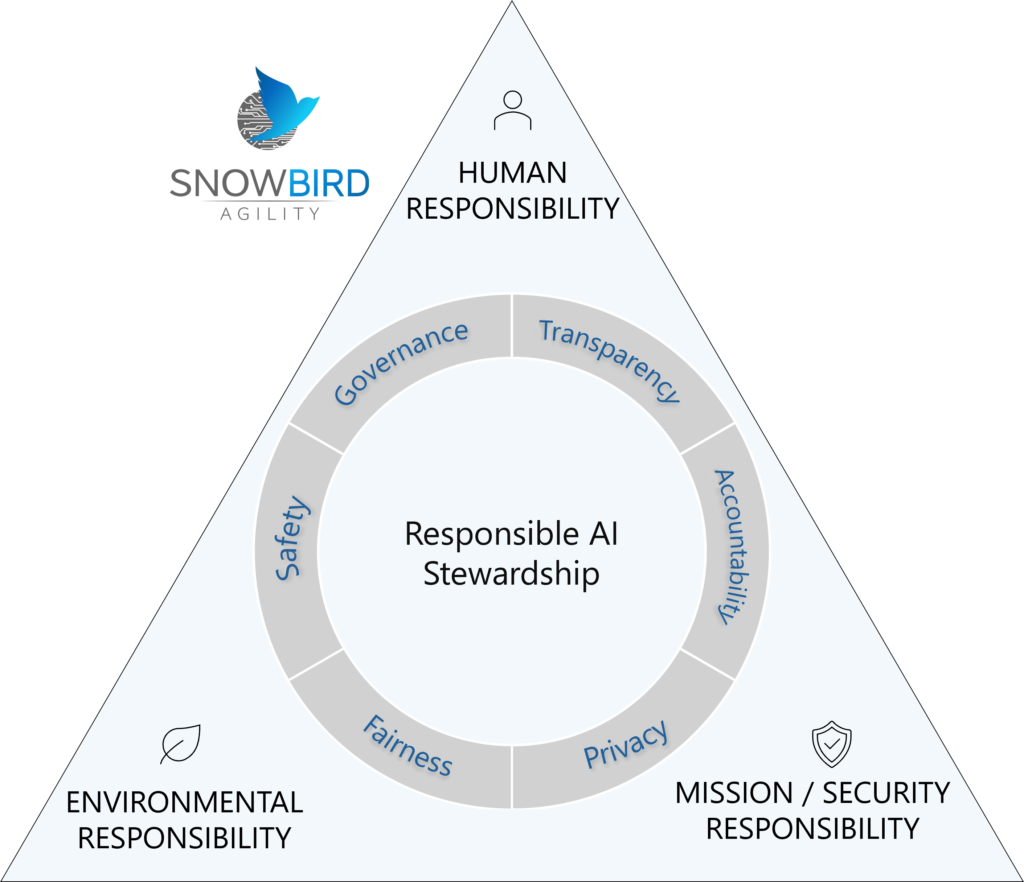

Why Responsible AI Is the Enabler of Scale

As agencies mature in their AI adoption, a common concern emerges: that Responsible AI will slow innovation.

In reality, it enables it.

In Formula 1, governance and engineering discipline are what allow teams to operate safely at the edge of performance. Without them, speed leads to failure.

In federal environments, the stakes are even higher.

AI systems influence decisions that impact:

- Citizen services

- Regulatory enforcement

- Public safety

- Allocation of taxpayer resources

Responsible AI ensures that these systems are:

- Transparent

- Explainable

- Fair

- Secure

It is not a compliance exercise. It is the stewardship layer that enables agencies to scale AI with confidence and maintain public trust.

What Actually Changes

In Formula 1, the difference between a fast car and a championship team isn’t components; it’s integration. The same is true here.

The transition from AI-enabled to AI-native is not just technological—it is organizational:

- From AI as a tool → to AI as a design principle

- From isolated use cases → to mission-wide intelligence flows

- From human-only decisions → to human-AI collaboration

- From static processes → to adaptive, learning systems

This is the difference between improving operations and transforming how agencies deliver on their mission.

A Reflection for Leaders

To understand where your agency stands, consider:

- Are we using AI within individual programs—or embedding it across mission delivery?

- Are we automating existing processes—or redesigning them?

- Where are AI-driven decisions impacting citizens—and how are those decisions governed?

- Do we have clear accountability for AI-supported decisions in high-risk areas?

These questions help reveal whether your organization is AI-enabled, AI-first, or moving toward AI-native.

What Comes Next: The AI Maturity Ladder

Responsible AI provides the guardrails.

But agencies still need a structured path forward.

In the next article, we’ll explore: “AI Maturity – The AI Maturity Ladder”

A practical framework for understanding how agencies evolve across these stages—and the capabilities required to do so responsibly.

Because in the age of AI, success is no longer defined by adoption…but by how deliberately agencies evolve their mission systems around intelligence—while maintaining trust, accountability, and stewardship.

View an AI-Enabled to AI-Native Chart here

Jason Blake is a Senior Agilist and Scaled Agile AI-Native Instructor at Snowbird Agility. He may be reached at [email protected]

Sources

- U.S. Department of Justice – Office of Information Policy (FOIA modernization efforts): https://www.justice.gov/oip

- National Archives and Records Administration – FOIA Advisory Committee: https://www.archives.gov/ogis/foia-advisory-committee

- Centers for Medicare & Medicaid Services – Fraud Prevention System: https://www.cms.gov/About-CMS/Components/CPI/CPIReportingFraud

- Government Accountability Office – Medicare Fraud Analytics Report: https://www.gao.gov/products/gao-21-105309

- U.S. Department of Veterans Affairs – AI and benefits modernization: https://www.va.gov/opa/pressrel/pressrelease.cfm?id=5702

- AI.gov – Federal AI Use Case Inventory: https://www.ai.gov/ai-use-cases/
